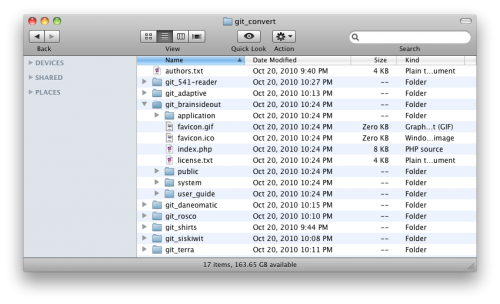

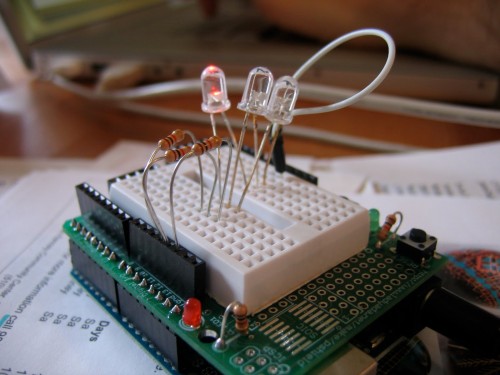

My work with Hans and Umbach on physical computing and embodied interaction took an interesting turn recently, down a path I hadn’t anticipated when I set out to pursue this project. My initial goal with this independent study was to develop the skills necessary to work with electronics and physical computing as a prototyping medium. In recent years, hardware platforms such as Arduino and programming environments such as Wiring have clearly lowered the barrier to entry for getting involved in physical computing, and have allowed even the electronics layman to build some super cool stuff.

Rob Nero presented his TRKBRD prototype at Interaction 10, an infrared touchpad built with Arduino that turns the entire surface of one’s laptop keyboard into a device-free pointing surface. Chris Rojas built an Arduino tank that can be controlled remotely through an iPhone application called TouchOSC. What’s super awesome is that most everyone building this stuff is happy to share their source code, and contribute their discoveries back to the community. The forums on the Arduino website are on fire with helpful tips, and it seems an answer to any technical question is only a Google search away. SparkFun has done tremendous work in making electronics more user-friendly and approachable, offering suggested uses, tutorials and data sheets right alongside the components they sell.

Dourish and Embodied Interaction: Uniting Tangible Computing and Social Computing

In tandem with my continuing education with electronics, I’ve been doing extensive research into embodied interaction, an emerging area of study in HCI that considers how our engagement, perception, and situatedness in the world influence how we interact with computational artifacts. Embodiment is closely related to a philosophical interest of mine, phenomenology, which studies the phenomena of experience and how reality is revealed to, and interpreted by, human consciousness. Phenomenology brackets off the external world and isn’t concerned with establishing a scientifically objective understanding of reality, but rather looks at how reality is experienced through consciousness.

Paul Dourish outlines a notion of embodied interaction in his landmark work, “Where The Action Is: The Foundations of Embodied Interaction.” In Chapter Four he iterates through a few definitions of embodiment, starting with what he characterizes as a rather naive one:

“Embodiment 1. Embodiment means possessing and acting through a physical manifestation in the world.”

He takes issue with this definition, however, as it places too high a priority on physical presence, and proposes a second iteration:

“Embodiment 2. Embodied phenomena are those that by their very nature occur in real time and real space.”

Indeed, in this definition embodiment is concerned more with participation than physical presence. Dourish uses the example of conversation, which is characterized by minute gestures and movements that hold no objective meaning independent of human interpretation. In “Technology as Experience” McCarthy and Wright use the example of a wink versus a blink. While closing and opening one’s eye is an objective natural phenomenon that exists in the world, the meaning behind a wink is more complicated; there are issues of the intent of the “winker”, whether they intend for the wink to represent flirtation, collusion, or whether they simply had a speck of dirt in their eye. There are also issues of interpretation of the “winkee”, whether they perceive the wink, how they interpret the wink, and whether or not they interpret it as intended by the “winker.”

Thus, Dourish’s second iteration on embodiment deemphasizes physical presence while allowing for these subjective elements that do not exist independent of human consciousness. A wink cannot exist independent of real time and real space, but its meaning involves more than just its physicality. Indeed, Edmund Husserl originally proposed phenomenology in the early 20th century, but it was his student Martin Heidegger who carried it forward into the realm of interpretation. Hermeneutics is an area of study concerned with the theory of interpretation, and thus Heidegger’s hermeneutical phenomenology (or the study of experience and how it is interpreted by consciousness) has become the foundation of all recent phenomenological theory.

Beyond Heidegger, Dourish takes us through Alfred Schutz, who considered intersubjectivity and the social world of phenomenology, and Maurice Merleau-Ponty, who deliberately considered the human body by introducing the embodied nature of perception. In wrapping up, Dourish presents a third definition of embodiment:

“Embodiment 3. Embodied phenomena are those which by their very nature occur in real time and real space. … Embodiment is the property of our engagement with the world that allows us to make it meaningful.”

Thus, Dourish says:

“Embodied interaction is the creation, manipulation, and sharing of meaning through engaged interaction with artifacts.”

Dourish’s thesis behind “Where The Action Is” is that tangible computing (computer interactions that happen in the world, through the direct manipulation of physical artifacts) and social computing (computer-augmented interaction that involves the continual navigation and reconfiguration of social space) are two sides of the same coin; namely, that of embodied interaction. Just as tangible interactions are necessarily embedded in real space and real time, social interaction is embedded as an active, practical accomplishment between individuals.

According to Dourish, embodied computing is a larger frame that encompasses tangible computing and social computing. This is a significant observation, and “Where The Action Is” is a landmark achievement. But, as Dourish himself admits, there isn’t a whole lot new here. He connects the dots between two seemingly unrelated areas of HCI theory, unifies them under the umbrella term embodied interaction, and leaves it to us to work it out from there.

And I’m not so sure that’s happened. “Where The Action Is” came out nine years ago, and based on the papers I’ve read on embodied interaction, few have attempted to extend the definition beyond Dourish’s work. While I wouldn’t describe his book as inadequate, I would certainly characterize it as a starting point, a significant one at that, for extending our thoughts on computing into the embodied, physical world.

From Physical Computing to Notions of Embodiment

For the last two months I have been researching theories on embodiment, teaching myself physical computing, and reflecting deeply on my experience of learning the arcane language of electronics. Even with all the brilliantly-written books and well-documented tutorials in the world, I find that learning electronics is hard. It frequently violates my common-sense experience with the world, and authors often use familiar metaphors to compensate for this. Indeed, electricity is like water, except when it’s not, and it flows, except when it doesn’t.

In reading my reflections I can trace the evolution of how I’ve been thinking about electronics, how I discover new metaphors that more closely describe my experiences, reject old metaphors, and become increasingly disenchanted with the idea that this is a domain of expertise I can master in three months. What is interesting is not that I was wrong in my conceptualizations of how electronics work, however, but how I was wrong and how I found myself compensating for it.

While working with a seven-segment display, for instance, I could not figure out which segmented LED mapped to which pin. As I slowly began to figure this out, it did not seem to map to any recognizable pattern, and certainly did not adhere to my expectations. I thought the designers of the display must have had deliberately sinister motives in how their product so effectively violated any sort of common sense interpretation.

To compensate, I drew up my own spatial map, both on paper as well as in my mind, to establish a personal pattern where no external pattern was immediately perceived. “The pin in the upper lefthand corner starts on the middle, center segment,” I told myself, “and spirals out clockwise from there, clockwise for both the segments as well as the pins, skipping the middle-pin common anodes, with the decimal seated awkwardly between the rightmost top and bottom segments.”

It was this personal spatial reasoning, this establishment of my own pattern language to describe how the seven-segment display worked, that made me realize how strongly my own embodied experience determines how I perceive, interact with, and make sense of the world. So long as a micro-controller has been programmed correctly, it doesn’t care which pin maps to which segment. But for me, a bumbling human who is poor at numbers but excels at language, socialization and spatial reasoning, you know, those things that humans are naturally good at, I needed some sort of support mechanism. And that mechanism arose out of my own embodied experience as a real physical being with certain capabilities for navigating and making sense of a real physical world.
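To make that last point concrete, here is a minimal sketch in Python (a model of the logic, not Arduino code) of why the wiring order is irrelevant to the program. The segment letters a through g are the conventional names for the seven segments; the pin numbers in `SEGMENT_TO_PIN` are a hypothetical wiring chosen purely for illustration, not the pinout of the display described above.

```python
# Conventional segment names: a (top), b (upper right), c (lower right),
# d (bottom), e (lower left), f (upper left), g (middle).
DIGIT_SEGMENTS = {
    0: "abcdef",
    1: "bc",
    2: "abdeg",
    3: "abcdg",
    4: "bcfg",
    5: "acdfg",
    6: "acdefg",
    7: "abc",
    8: "abcdefg",
    9: "abcdfg",
}

# HYPOTHETICAL wiring: which pin drives which segment. Any other
# assignment works just as well, as long as this table matches the
# physical wiring.
SEGMENT_TO_PIN = {"a": 7, "b": 6, "c": 4, "d": 2, "e": 1, "f": 9, "g": 10}


def pins_for_digit(digit):
    """Return the sorted list of pins that must be driven to show `digit`."""
    return sorted(SEGMENT_TO_PIN[segment] for segment in DIGIT_SEGMENTS[digit])


print(pins_for_digit(1))  # [4, 6]
print(pins_for_digit(8))  # [1, 2, 4, 6, 7, 9, 10]
```

The program only ever consults the table. The spiral, the clockwise ordering, and the awkwardly seated decimal point are supports for the human doing the wiring, and none of them appear in the code.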

Over time this realization, that I am constantly leveraging my own embodiment as a tool to interpret the world, dwarfed the interest I had in learning electronics. I’m still trying to figure out how to get an 8×8 64-LED matrix to interface with an Arduino through a series of 74HC595N 8-bit shift registers, so I can eventually make it play Pong with a Wii Nunchuk. That said, it’s frustrating that every time I try to do something, the chip I have is not the chip I need, and the chip I need is $10 plus $5 shipping and will arrive in a week, and by the way have I thought about how to send constant current to all the LEDs so they’re all of similar brightness because my segmented number “8” is way dimmer than my segmented number “1” because of all the LEDs that need to light up, and oh yeah, there’s an app for that.

Sigh.

Especially when I’m trying to play Pong on my 8×8 LED matrix, while someone else is already playing Super Mario Bros. on hers.
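As an aside on the dim “8” mentioned above: one common cause, assuming the display shares a single current-limiting resistor on its common pin (an assumption, since the wiring isn’t described here), is that a fixed total current gets split among however many segments are lit. A rough Python sketch of that arithmetic, with hypothetical supply voltage, LED forward voltage, and resistor values, and an idealized model in which all segments are identical:

```python
def per_segment_current_ma(lit_segments, supply_v=5.0, forward_v=2.0,
                           resistor_ohms=220.0):
    """Idealized current per lit segment when ONE resistor is shared.

    Assumes identical LEDs with a constant forward voltage, so the total
    current is fixed by the resistor and divides evenly among segments.
    All default values are hypothetical.
    """
    total_ma = (supply_v - forward_v) / resistor_ohms * 1000.0
    return total_ma / lit_segments


# A "1" lights 2 segments; an "8" lights all 7.
print(round(per_segment_current_ma(2), 1))  # 6.8
print(round(per_segment_current_ma(7), 1))  # 1.9
```

Under these assumptions each segment of the “8” gets well under a third of the current that each segment of the “1” does, which would explain the difference in brightness. Giving every segment its own resistor, or using a constant-current driver, keeps the per-segment current independent of how many segments are lit.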

Extending Notions of Embodiment into Design Practice

In accordance with Merleau-Ponty and his work introducing the human body to phenomenology, and the work of Lakoff and Johnson in extending our notions of embodied cognition, I believe that the human body itself is central to structuring the way we perceive, interact with, and make sense of the world. Thus, I aim to take up the challenge issued by Dourish, and extend our notions of embodiment as they apply to the design of computational interactions. The goal of my work is to establish a language of embodied interaction that will help design practitioners create more compelling, more engaging, more natural interactions.

Considering physical space and the human body is an enormous topic in interaction design. In a panel at SXSW Interactive last week, Peter Merholz, Michele Perras, David Merrill, Johnny Lee and Nathan Moody discussed computing beyond the desktop as a new interaction paradigm, and Ron Goldin from Lunar discussed touchless invisible interactions in a separate presentation. At Interaction 10, Kendra Shimmell demonstrated her work with environments and movement-based interactions, Matt Cottam presented his considerable work integrating computing technologies with the richly tactile qualities of wood, and Christopher Fahey even gave a shout-out specifically to “Where The Action Is” in his talk on designing the human interface (slide 50 in the deck). The migration of computing off the desktop and into the space of our everyday lives seems only to be accelerating, to the point where Ben Fullerton proposed at Interaction 10 that we as interaction designers need to begin designing not just for connectivity and ubiquity, but for solitude and opportunities to actually disconnect from the world.

Establishing a Language of Embodied Interaction for Design Practitioners

To recap, my goal is to establish a language of embodied interaction that helps designers navigate this increasing delocalization and miniaturization of computing. I don’t know yet what this language will look like, but a few guiding principles seem to be emerging from my work:

All interactions are tangible. There is no such thing as an intangible interaction. I reject the notion that tangible interaction, the direct manipulation of physical representations of digital information, is significantly different from manipulating pixels on a screen, interactions that involve a keyboard or pointing device, or even touch screen interactions.

Tangibility involves all the senses, not just touch. Tangibility considers all the ways that objects make their presence known to us, and involves all senses. A screen is not “intangible” simply because it is comprised of pixels. A pixel is merely a colored speck on a screen, which I perceive when its photons reach my eye. Pixels are physical, and exist with us in the real world.

Likewise, a keyboard or mouse is not an intangible interaction simply because it doesn’t afford direct manipulation. I believe the wall that has been erected between historic interactions (such as the keyboard and mouse) and tangible interactions (such as the wonderful Siftables project) is false, and has damaged the agenda of tangible interaction as a whole. These interactions exist on a continuum, not between tangible and intangible, but between richly physical and physically impoverished. A mouse doesn’t allow for a whole lot of nuance of motion or pressure, and a glass touch screen doesn’t richly engage our sense of touch, but they are both necessarily physical interactions. There is an opportunity to improve the tangible nature of all interactions, but it will not happen by categorically rejecting our interactive history on the grounds that it is not tangible.

Everything is physical. There is no such thing as the virtual world, and there is no such thing as a digital interaction. Ishii and Ullmer in the Tangible Media Group at the MIT Media Lab have done extensive work on tangible interactions, characterizing them as physical manifestations of digital information. “Tangible Bits,” the title of their seminal work, largely summarizes this view. Repeatedly in their work, they set up a dichotomy between atoms and bits, physical and digital, real and virtual.

The trouble is, all information that we interact with, no matter if it is in the world or stored as ones and zeroes on a hard drive, shows itself to us in a physical way. I read your text message as a series of Latin characters rendered by physical pixels that emit physical photons from the screen on my mobile device. I perceive your avatar in Second Life in a similar manner. I hear a song on my iPod because the digital information of the file is decoded by the software, which causes the thin membrane in my headphones to vibrate at a particular frequency. Even if I dive deep and study the ones and zeroes that comprise that audio file, I’m still seeing them represented as characters on a screen.

All information, in order to be perceived, must be rendered in some sort of medium. Thus, we can never interact with information directly, and all our interactions are necessarily mediated. As with the supposed wall between tangible interactions and the interactions that preceded them, the wall between physical and digital, or real and virtual, is equally false. We never see nor interact with digital information, only the physical representation of it. We cannot interact with bits, only atoms. We do not and cannot exist in a virtual world, only the real one.

This is not to say that talking with someone in-person is the same as video chatting with them, or talking on the phone, or text messaging back and forth. Each of these interactions is very different based on the type and quality of information you can throw back and forth. It is, however, to illustrate that there isn’t necessarily any difference between a physical interaction and a supposed virtual one.

Thus, what Ishii and Ullmer propose, communicating digital information by embodying it in ambient sounds or water ripples or puffs of air, is no different than communicating it through pixels on a screen. What’s more, these “virtual” experiences we have, the “virtual” friendships we form, the “virtual” worlds we live in, are no different than the physical world, because they are all necessarily revealed to us in the physical world. The limitations of existing computational media may prevent us from allowing such high-bandwidth interactions as those allowed by face-to-face interaction (think of how much we communicate through subtle facial expressions and body language), but the fact that these interactions are happening through a screen, rather than at a coffee shop, does not make them virtual. It may, however, make them an impoverished physical interaction, as they do not engage our wide array of senses as a fully in-the-world interaction does.

Again, the dichotomy between real and virtual is false. The dichotomy between physical and digital is false. What we have is a continuum between physically rich and physically impoverished. It is nonsense to speak of digital interactions, or virtual interactions. All interactions are necessarily physical, are mediated by our bodies, and are therefore embodied.

The traditional compartmentalization of senses is a false one. In confining tangible interactions to touch, we ignore how our senses work together to help us interpret the world and make sense of it. The disembodiment of sensory inputs from one another is a byproduct of the compartmentalization of computational output (visual feedback from a screen rendered independently from audio feedback from a speaker, for instance) that contradicts our felt experience with the physical world. “See with your ears” and “hear with your eyes” are not simply convenient metaphors, but describe how our senses work in concert with one another to aid perception and interpretation.

Humans have more than five senses. Our experience with everything is situated in our sense of time. We have a sense of balance, and our sense of proprioception tells us where our limbs are situated in space. We have a sense of temperature and a sense of pain that are related to, but quite independent from, our sense of touch. Indeed, how can a loud sound “hurt” our ears if our sense of pain is tied to touch alone? Further, some animals can see in a wider color spectrum than humans, can sense magnetic or electrical fields, or can detect minute changes in air pressure. If computing somehow made these senses available to humans, how would that change our behavior?

My goal in breaking open these senses is not to arrive at a scientific account of how the brain processes sensory input, but to establish a more complete subjective, phenomenological account that offers a deeper understanding of how the phenomena of experience are revealed to human consciousness. I aim to render explicit the tacit assumptions that we make in our designs as to how they engage the senses, and uncover new design opportunities by mashing them together in unexpected ways.

Embodied Interaction: A Core Principle for Designing the Next Generation of Computing

By transcending the senses and considering the overall experience of our designs in a deeper, more reflective manner, we as interaction designers will be empowered to create more engaging, more fulfilling interactions. By considering the embodied nature of understanding, and how the human body plays a role in mediating interaction, we will be better prepared to design the systems and products for the post-desktop era.